
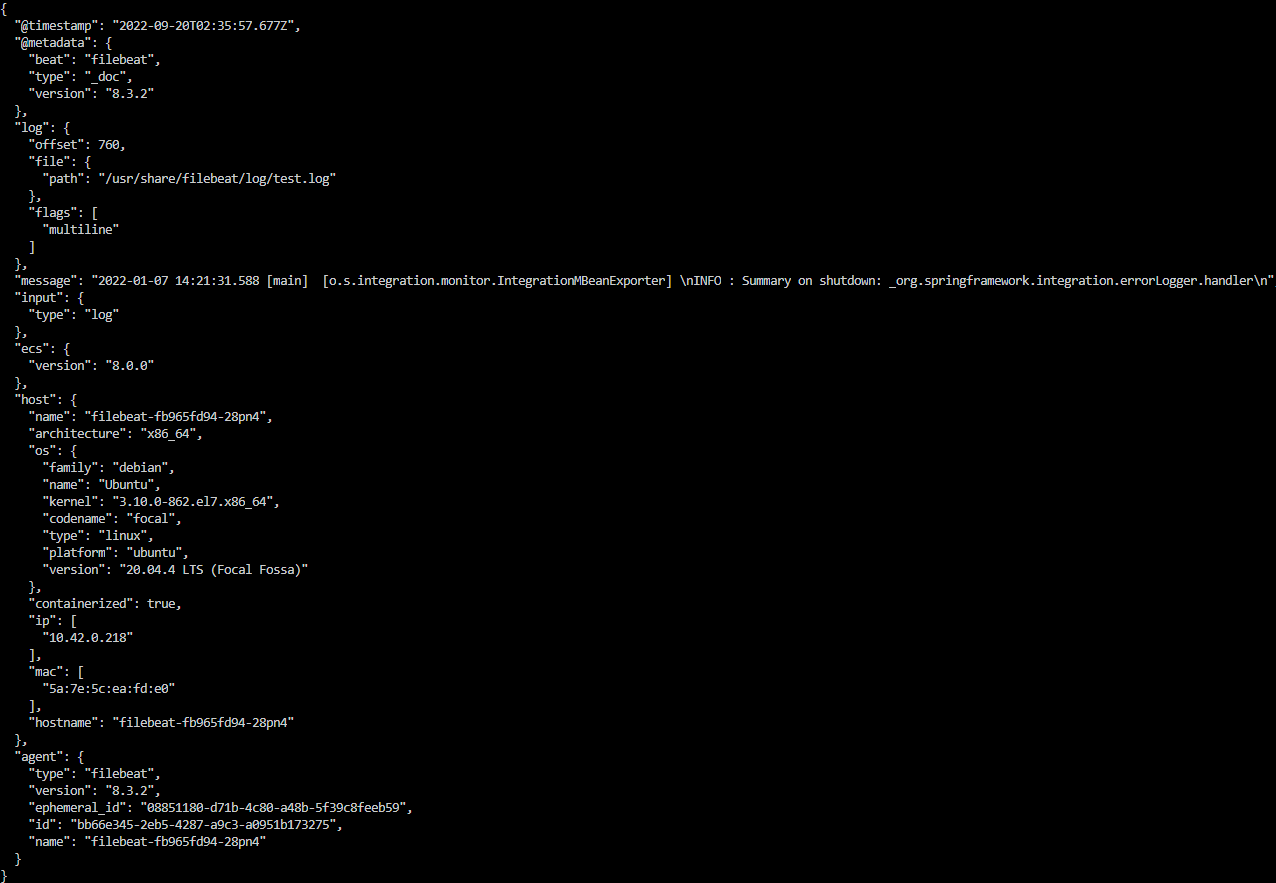

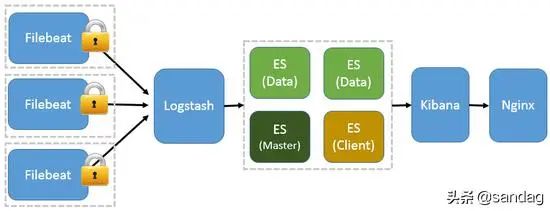

: `field` must be non-null
at java.base/(Objects.java:246)
at .(Struct.java:474)
at .(Struct.java:418)
at .(Struct.java:436)
at .(ProduceResponse.java:281)
at .(AbstractResponse.java:35)
at .(RequestContext.java:80)
at (KafkaApis.scala:2892)
at $2(KafkaApis.scala:554)
at .$anonfun$handleProduceRequest$11(KafkaApis.scala:576)
at .$anonfun$handleProduceRequest$11$adapted(KafkaApis.scala:576)
at (ReplicaManager.scala:546)
at (KafkaApis.scala:577)
at (KafkaApis.scala:126)
at (KafkaRequestHandler.scala:70)
at java.base/(Thread.

Kafka comes into play when we are logging at scale. You can direct your Filebeat logs towards Logstash.

Why Kafka? ELK on its own would be sufficient if there aren't many logs to process. Kafka is open source software for storing, reading, and analysing streams of data.

There is a wide range of supported output options, including console, file, cloud, Redis, and Kafka, but in most cases you will be using the Logstash or Elasticsearch output types. Define a Logstash instance for more advanced processing and data enhancement.

Start Kafka before starting Filebeat so that it is already listening for published events, and configure Filebeat with the same Kafka server port. Kafka output required configuration: comment out the `output.elasticsearch` section and uncomment the Kafka output section.

ERROR Error when handling request: clientId=beats, correlationId=102, api=PRODUCE, version=2, body= ()

EDIT: based on the new information, note that you need to tell Filebeat which indexes it should use. You can also turn up debugging in Filebeat, which will show you when information is being sent to Logstash. Check /.filebeat (for the user who runs Filebeat).
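The Kafka output change described above might look roughly like the following in `filebeat.yml`. This is a minimal sketch, not the configuration from the original setup: the broker address `localhost:9092` and the topic name `filebeat-logs` are assumptions for illustration, so substitute the host and port your Kafka server actually listens on.

```yaml
# Elasticsearch output commented out: Filebeat allows only one active output.
# output.elasticsearch:
#   hosts: ["localhost:9200"]

output.kafka:
  # Assumed broker address; must match the port your Kafka server listens on.
  hosts: ["localhost:9092"]
  # Hypothetical topic name used only for this example.
  topic: "filebeat-logs"
  # Wait for the partition leader to acknowledge each batch.
  required_acks: 1
```

With this in place, Logstash would read from the same topic instead of receiving events from Filebeat directly.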

This section in the Filebeat configuration file defines where you want to ship the data to. This section shows how to set up Filebeat modules to work with Logstash when you are using Kafka in between Filebeat and Logstash in your publishing pipeline. Filebeat keeps information on what it has sent to Logstash.

The SASL mechanism to use when connecting to Kafka. If the mechanism is not set, PLAIN is used if username and password are provided. Otherwise, SASL authentication is disabled.

ERROR Error processing append operation on partition test-3-0 ()

InvalidRecordException: Inner record LegacyRecordBatch(offset=0, Record(magic=1, attributes=0, compression=NONE, crc=1453875406, CreateTime=1583471854475, key=0 bytes, value=202 bytes)) inside the compressed record batch does not have incremental offsets, expected offset is 1 in topic partition test-3-0.
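The SASL behaviour described above can be illustrated with a short `filebeat.yml` fragment. Treat it as a sketch under assumptions: the broker address, topic, user name, and the `KAFKA_PASSWORD` environment variable are all hypothetical, and whether your brokers accept SCRAM depends on how they are configured.

```yaml
output.kafka:
  # Assumed broker address and hypothetical topic name.
  hosts: ["localhost:9092"]
  topic: "filebeat-logs"

  # Hypothetical credentials; the password is read from an environment variable.
  username: "filebeat"
  password: "${KAFKA_PASSWORD}"

  # Explicit mechanism. If this line is removed, PLAIN is used because
  # username and password are set; with no credentials, SASL is disabled.
  sasl.mechanism: SCRAM-SHA-512
```

Leaving out `username`, `password`, and `sasl.mechanism` altogether gives an unauthenticated connection, which matches the default described in the text.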
